Chinese AI models overtake U.S. rivals in global token usage. /VCG

Chinese-developed artificial intelligence (AI) models have surpassed U.S. counterparts in global token usage for the first time, according to data released by AI aggregation platform OpenRouter.

This week's OpenRouter rankings show MiniMax's M2.5 model leading global usage with about 1.7 trillion weekly tokens processed.

Google's Gemini 3 Flash Preview ranked second with about 997 billion tokens, followed by China's DeepSeek V3.2 at roughly 798 billion tokens.

Moonshot AI's Kimi K2.5 and Zhipu AI's GLM-5 also entered the global top tier, each recording more than 600 billion tokens in developer usage.

OpenRouter data indicate that Chinese models now occupy multiple positions among the world's most-used large language models, reflecting growing adoption by overseas developers.

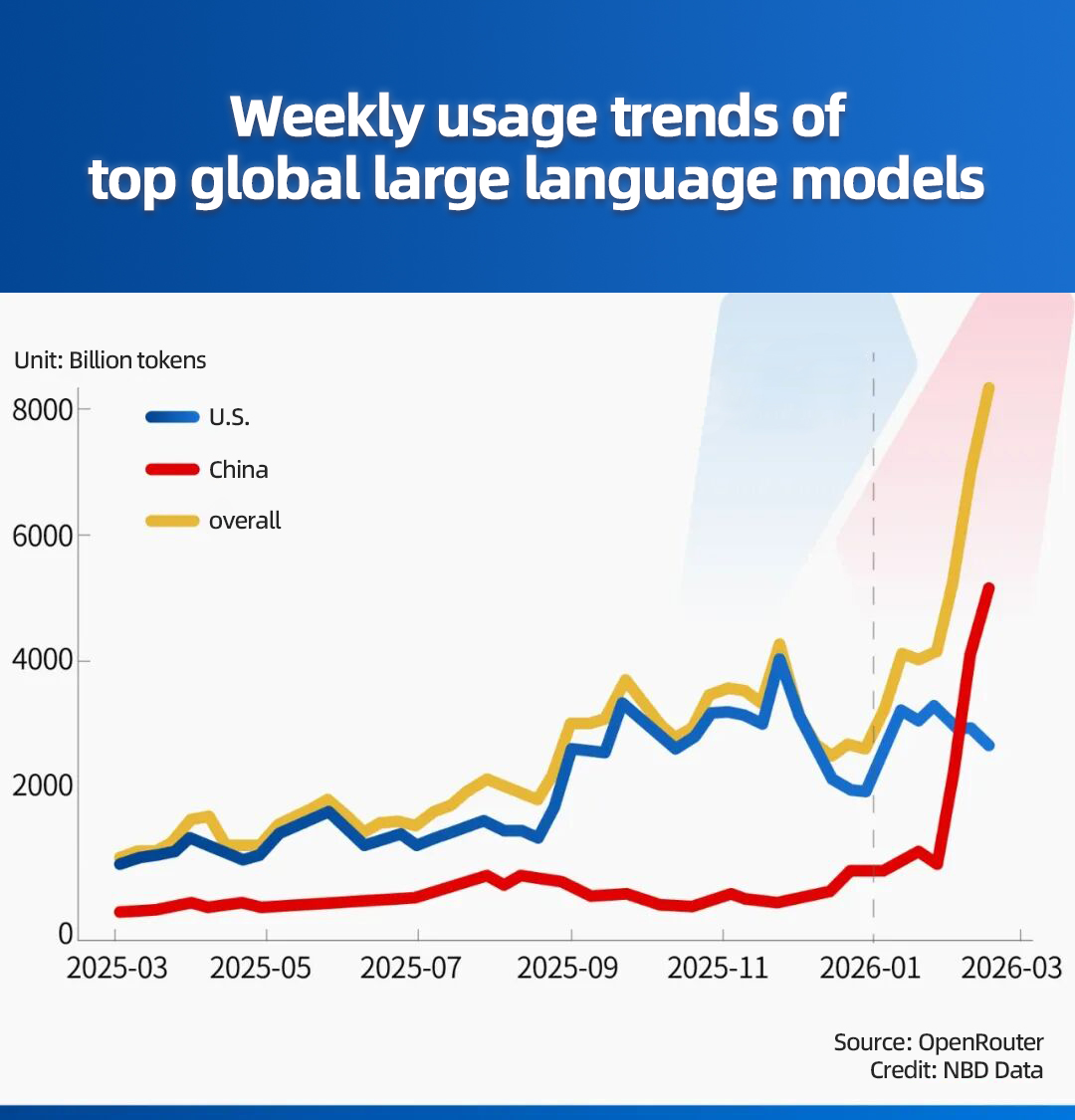

Statistics from National Business Daily (NBD) earlier in February showed Chinese models reaching about 5.16 trillion tokens in combined usage, compared with roughly 2.7 trillion tokens for U.S. models.

Weekly usage trends of top global large language models. /National Business Daily

Token consumption has emerged as a key indicator of real-world deployment, measuring how frequently models are integrated into applications, industry observers told NBD. The rankings highlight intensifying global competition in artificial intelligence, as developers increasingly prioritize large-scale deployment and usage efficiency alongside model capability.
